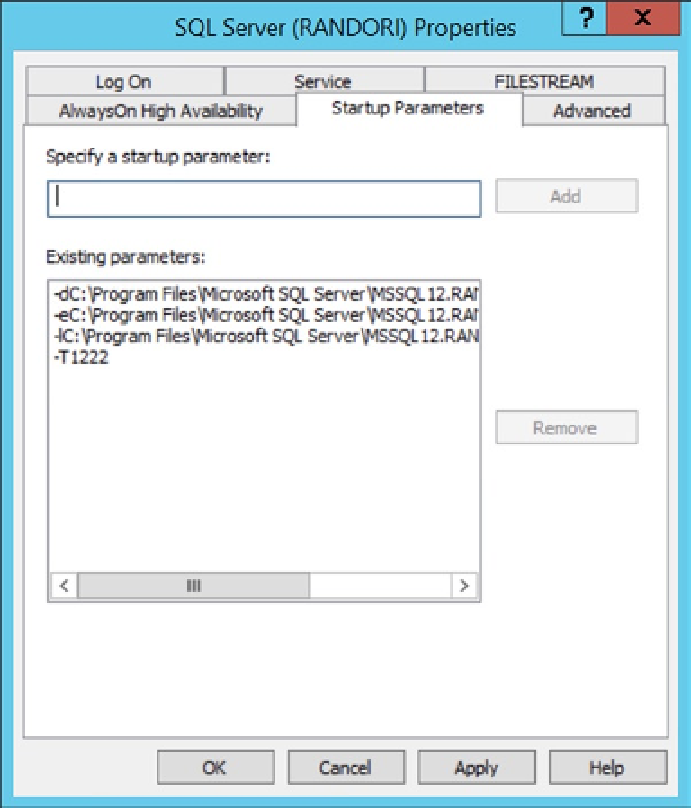

Recently I was asked about diagnosing deadlocks in SQL Server. I've done a lot of work in this area, way back in 2008, so I figure it's time for a refresher.

I've been trying to optimise a data loading process. This is on SQL Server 2016 (.14 - latest SP&CU). I've switched to change tracking for internal database changes and set TABLOCK on all my inserts. As change tracking recommends, I've enabled snapshot isolation.

Originally I was getting some of the traditional intra-query parallel thread deadlocks, so I created some cache tables as standard user tables with indexes and pre-populated them to simplify the plans significantly. Now I am getting a different kind of self-deadlock. These only have page locks in tempdb (not an exchangeEvent in sight), which makes me think that something else is going on.

It is now a pretty simple query, left joining to a bunch of tables to look up keys and applying a WHERE ... IN clause on changetable(changes ...). It doesn't deadlock if I either remove the WHERE clause on changetable(changes ...) (so my query is no longer honouring the change tracking) or if I don't run in a "set transaction isolation level snapshot" transaction. But change tracking explicitly says it is needed.

I know I could set DOP to 1, but parallelism does make a massive difference to the execution time. I'm considering forgetting changetable and going back to having an indexed timestamp column for change tracking.

    Transaction_id, TransactionDateKey, BarcodeNumber, CurrencyKey, VisitRecordKey, MarketingActivityKey, GroupBookingKey, BookingTypeKey, MarketingPartnerKey, Time, VillageKey, BrandKey, LeaseKey, CustomerKey, LocalisationKey, OfferKey, GeographyKey, eVIPTier, BarcodeMetadataSource, GrossBrandSalesTracked, TaxAmount, _AuditKey, _LoadTimestamp, _RecordSource
    into #toload -- must insert into a temp table (not variable) to get parallel capability of the complex query
    from ...
    exec ... [LOAD_FactTrackedSales_FROM_DI_BIExport_TrackedSales_DS

I've been having deadlock problems for years on change_tracking tables. Here are a few things that I've done to reduce them.

#1 : Check the size of your change_tracking tables and your retention period

The bigger those tables get, the more likely you'll get deadlocks while querying them. Here is a query to get the size of the change_tracking tables.

    SET TRANSACTION ISOLATION LEVEL READ UNCOMMITTED
    ... as tracked_base_table_MB,
    change_tracking_min_valid_version(sot2.object_id) as min_valid_version
    ...
    JOIN sys.objects sot1 on it.object_id=sot1.object_id
    LEFT JOIN sys.objects sot2 on it.parent_object_id=sot2.object_id
    LEFT JOIN sys.dm_db_partition_stats ps2 on ...

#2 : Make sure your statistics are updated on the change tracking tables

I have some change_tracking tables that get so big that, from time to time during the day, statistics get outdated and I get terrible cached plans on those tables. I've set up a job that updates statistics when more than 100k changes have occurred in my tables. Here is the query I run in a job every hour.

    SET NOCOUNT ON
    DECLARE database_cursor CURSOR LOCAL FAST_FORWARD FOR
    SELECT name from sys.databases where state=0
    FETCH NEXT FROM database_cursor INTO ...
    ... = 0
    DECLARE cur CURSOR LOCAL FORWARD_ONLY FOR
    ...
    , STATS_DATE(st.object_id, st.stats_id) AS ...
    ... sys.dm_db_stats_properties(st.object_id, st.stats_id) AS sp
    WHERE OBJECT_NAME(st.object_id) like ''change_t%''
    AND OBJECT_NAME(st.object_id) in (SELECT ''change_tracking_'' + convert(nvarchar(255),st.object_id) FROM sys.change_tracking_tables ctt inner join sys.tables st ON st.object_id = ctt.object_id inner join sys.schemas ss ON ss. ...
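As a rough companion to tips #1 and #2, here is a minimal T-SQL sketch of the two checks they describe: the retention settings, and which change tracking statistics have drifted. It is not the original hourly job, only an illustration. It assumes that your login can see the internal `change_tracking_<object_id>` side tables through `sys.stats`, and the 100000 threshold simply mirrors the "100k changes" figure mentioned above.

```sql
-- Sketch only: run inside the database that has change tracking enabled.

-- Tip #1: retention period and auto-cleanup setting for each tracked database.
SELECT DB_NAME(ctd.database_id)      AS database_name,
       ctd.is_auto_cleanup_on,
       ctd.retention_period,
       ctd.retention_period_units_desc
FROM sys.change_tracking_databases AS ctd;

-- Tip #2: statistics on change tracking side tables with many modifications
-- since the last update. The threshold is an assumption; adjust it to your load.
SELECT OBJECT_NAME(s.object_id)      AS table_name,
       s.name                        AS stats_name,
       sp.last_updated,
       sp.rows,
       sp.modification_counter
FROM sys.stats AS s
CROSS APPLY sys.dm_db_stats_properties(s.object_id, s.stats_id) AS sp
WHERE OBJECT_NAME(s.object_id) LIKE 'change[_]tracking[_]%'  -- [_] matches a literal underscore
  AND sp.modification_counter > 100000;
```

The second query only lists candidates. Whether to follow it with UPDATE STATISTICS on each one, and with what sampling, depends on how large the side tables are.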
